Here’s why we measure time in seconds (and why seconds are the length they are)

This week, Facebook ruffled some feathers with the invention of a new unit of time – the ‘flick’.

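Facebook’s ‘flicks’ last just 1/705,600,000 of a second. The figure was chosen to help programmers producing visual effects: the duration of a single frame at common video frame rates (24, 25, 30, 60 or 120 frames per second, for example), and of a single sample at common audio sampling rates, works out to an exact whole number of flicks.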
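The arithmetic behind the flick is easy to check. The Python sketch below divides 705,600,000 by a selection of common frame rates and audio sample rates, including the slightly slower NTSC television rates, and confirms that each gives a whole number of flicks. The rate lists and the helper `flicks_per_tick` are our own illustrations, not Facebook’s code.

```python
from fractions import Fraction

FLICKS_PER_SECOND = 705_600_000  # 1 flick = 1/705,600,000 of a second

# Common video frame rates (frames per second) and audio sample rates (Hz).
FRAME_RATES = [24, 25, 30, 48, 50, 60, 90, 100, 120]
SAMPLE_RATES = [8_000, 16_000, 22_050, 24_000, 32_000, 44_100, 48_000, 88_200, 96_000, 192_000]
# NTSC video runs slightly slow: 24, 30, 60 and 120 fps multiplied by 1000/1001.
NTSC_RATES = [Fraction(fps * 1000, 1001) for fps in (24, 30, 60, 120)]


def flicks_per_tick(rate):
    """Length of one frame (or one audio sample) at `rate` Hz, in flicks, as an exact fraction."""
    return Fraction(FLICKS_PER_SECOND) / Fraction(rate)


for rate in FRAME_RATES + SAMPLE_RATES + NTSC_RATES:
    ticks = flicks_per_tick(rate)
    # A denominator of 1 means the duration is a whole number of flicks.
    print(f"{float(rate):10.3f} Hz -> {ticks} flicks (whole: {ticks.denominator == 1})")
```

A rate that does not divide 705,600,000, such as 11 Hz, would produce a fractional result instead, which is exactly the kind of rounding a whole-number unit is meant to avoid.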

But why do we even count time in seconds at all?

Surprisingly, the history of the second is far, far longer than the history of devices capable of measuring it.

So who decided how long a second is? And why do we still use the measurement today?

Why seconds count

Throughout human history, measuring time accurately has been key to technological progress, and demand for mechanical clocks spurred advances in manufacturing during the Industrial Revolution.

In the 19th century, pendulum clocks, accurate to the second, allowed the development of road and railway networks, and timed the shifts of factory workers.

In today’s hyper-connected world, where telecommunications signals are timed in slots of a tenth of a nanosecond, accurate atomic clocks keep the world working.

In the future, clocks far more accurate than the ones we use now may steer human beings towards Mars, and even beyond our solar system.

Why sixty?

But the idea of ‘seconds’, or at least of units of 1/60, dates back millennia before the birth of Christ.

The basic idea of dividing measurements into sixty came from the ‘sexagesimal’ maths of the ancient Sumerians, around 3000 BC.

It was adopted by the ancient Babylonians, who counted in ‘base 60’ rather than the base 10 of the decimal system we use today.

Some suggest that the system may have come from counting on the hands: using the thumb to tot up the three joints on each of the other four fingers gives 12, and counting off each dozen on the five fingers of the other hand gives 12 × 5 = 60.

The sexagesimal system still underpins the way we measure angles, the way we divide the globe and the way we measure time.

Ancient Greek writers such as Archimedes divided circles into 360 degrees, using the same techniques as Babylonian astronomers.

The main way of measuring time was the sundial, so telling the time remained closely tied to measuring the angles of a circle.

The term ‘second’ comes from Claudius Ptolemy’s ‘Almagest’, where he discussed dividing degrees of latitude and longitude.

The first division of a degree into sixty parts gave ‘partes minutae primae’ (first small parts), and the second division gave ‘partes minutae secundae’ (second small parts).

The two became known as ‘minutes’ and ‘seconds’.
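Because a minute is a sixtieth and a second a sixtieth of a sixtieth, converting a quantity into minutes and seconds is just repeated division by 60, and the same arithmetic works for hours and for degrees. A minimal Python sketch (the function names are illustrative, not from any standard library), which works in whole seconds to avoid floating-point rounding:

```python
def split_sixtieths(total_seconds: int) -> tuple[int, int, int]:
    """Split a whole number of seconds into (whole units, minutes, seconds).

    'Whole units' are hours when measuring time, or degrees when measuring angles.
    """
    total_minutes, seconds = divmod(total_seconds, 60)  # seconds left over after whole minutes
    units, minutes = divmod(total_minutes, 60)          # minutes left over after whole units
    return units, minutes, seconds


def decimal_degrees_to_dms(degrees: float) -> tuple[int, int, int]:
    """Convert a positive decimal angle into degrees, minutes and seconds of arc."""
    return split_sixtieths(round(degrees * 3600))  # 3,600 seconds of arc per degree


print(split_sixtieths(3_661))           # (1, 1, 1): one hour, one minute, one second
print(split_sixtieths(86_400))          # (24, 0, 0): one day
print(decimal_degrees_to_dms(51.4778))  # (51, 28, 40): 51 degrees, 28 minutes, 40 seconds of arc
```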

Measuring the second

But it wasn’t possible to measure time to the second until many centuries after Claudius Ptolemy wrote the ‘Almagest’, in around AD 150.

In fact, many early clock displays (from the 14th century on) didn’t show minutes at all; instead, they divided hours into halves, thirds or quarters.

The first mechanical clocks showing minutes appeared at the end of the 16th century.

Dutch mathematician Christiaan Huygens built a pendulum clock capable of measuring seconds in 1656, and patented it the following year.

Pendulum clocks were expensive, large and not portable (different mechanisms had to be used for clocks on ships, for instance).

Being able to tell time in seconds became a status symbol for rich families, until mass production brought clocks down in price.

Solar time

The second was established as a unit of scientific measurement in the 19th century – although it still wasn’t exactly the same second we use today.

The British Association for the Advancement of Science said in 1862, ‘All men of science are agreed to use the second of mean solar time as the unit of time.’

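‘Mean solar time’ is a mathematical calculation based on the passage of the sun over the meridian at noon. Because the length of the solar day varies slightly through the year, the calculation averages it out, and the mean solar second is 1/86,400 of this average day (24 × 60 × 60 = 86,400).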

Atomic clocks

But since 1967 the second has been defined by atomic clocks rather than the sun, and the ‘official’ time on Earth, International Atomic Time, is kept by a worldwide network of atomic clocks whose readings are averaged for even more accuracy.

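Microwave atomic clocks use atoms in place of a pendulum. In a caesium clock, for instance, the ‘tick’ is the oscillation of the microwave radiation that switches the outermost electron of a caesium atom between two energy states.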

International Atomic Time is used as the basis for Coordinated Universal Time – which is used to set computer clocks around the world.

The official definition of a second is ‘the duration of 9,192,631,770 periods of the radiation corresponding to the transition between the two hyperfine levels of the ground state of the caesium 133 atom.’
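Read the other way round, the definition fixes the caesium frequency at exactly 9,192,631,770 hertz, so a single period lasts about 108.8 picoseconds. The Python sketch below (the names are illustrative) converts a count of periods into seconds exactly. It is a simplification: a real caesium clock steers a quartz oscillator to this frequency rather than tallying atomic cycles one by one.

```python
from fractions import Fraction

CAESIUM_HZ = 9_192_631_770  # periods per second, exact by definition of the SI second


def periods_to_seconds(periods: int) -> Fraction:
    """Convert a count of caesium-133 hyperfine periods into seconds, exactly."""
    return Fraction(periods, CAESIUM_HZ)


one_period = periods_to_seconds(1)
print(f"one period: {float(one_period) * 1e12:.2f} picoseconds")
print(periods_to_seconds(CAESIUM_HZ))       # 1 (exactly one second)
print(periods_to_seconds(CAESIUM_HZ * 60))  # 60 (one minute)
```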

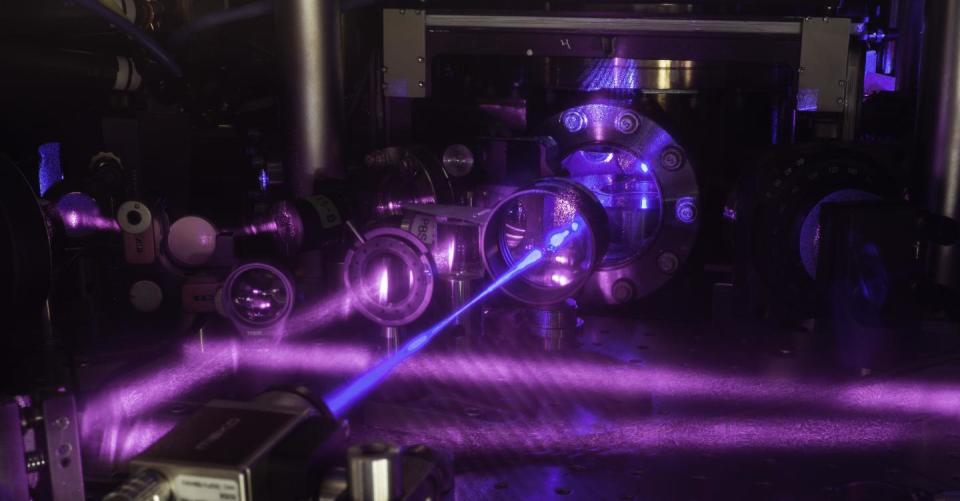

Optical clocks

But new technologies could change the official way we measure a second, and could be key to new communications technologies.

The optical lattice clock, which uses lasers and strontium atoms, is accurate to within one second every 300 million years, and is more stable than current caesium clocks.

Optical clocks work on the same principle as microwave atomic clocks, but use ions or atoms that oscillate at optical frequencies, roughly 100,000 times higher than microwave frequencies.

Such clocks could provide the basis for a new official definition of the second, scientists argue.

Jerome Lodewyck of the Paris Observatory said in 2014, ‘It isn’t the super-long timescales that interests us, but rather the very short ones.

‘Even an accuracy of a second in 300 million years still means a lag of about 0.01 of a nanosecond over the course of a day.

‘That is not really so little when you think about fibre optic communications and realize that a single telecommunications slot is 0.1 of a nanosecond.’
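Lodewyck’s figure is easy to reproduce. A clock that gains or loses one second in 300 million years has a fractional error of about 1 part in 10^16, and multiplying that by the 86,400 seconds in a day gives the daily drift. A rough Python sketch, assuming a 365.25-day year (the function names are illustrative):

```python
SECONDS_PER_DAY = 86_400
DAYS_PER_YEAR = 365.25  # assumed average year length for this estimate


def fractional_error(error_seconds: float, years: float) -> float:
    """Clock error as a fraction of the elapsed time."""
    return error_seconds / (years * DAYS_PER_YEAR * SECONDS_PER_DAY)


def daily_drift_ns(error_seconds: float, years: float) -> float:
    """Average drift accumulated over one day, in nanoseconds."""
    return fractional_error(error_seconds, years) * SECONDS_PER_DAY * 1e9


frac = fractional_error(1.0, 300e6)
drift = daily_drift_ns(1.0, 300e6)
slot_ns = 0.1  # telecom slot length quoted by Lodewyck

print(f"fractional error: {frac:.2e}")
print(f"drift per day: {drift:.4f} ns")
print(f"share of one 0.1 ns slot: {drift / slot_ns:.1%}")
```

At roughly 9% of a 0.1-nanosecond slot per day, the drift is small but, as Lodewyck notes, not negligible for fibre-optic communications.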

In the future, such clocks might help navigate human beings towards other planets – and even beyond, says Jun Ye of the University of Colorado Boulder.

He says, ‘It’s a key piece of technology that allows us to navigate on Earth as well as, in the future, navigate to Mars and beyond our solar system.’

Yahoo News
