MIT builds robot hand that can ‘see and feel’ objects as fragile as a crisp in major breakthrough

Robotic hands capable of picking up objects as fragile as a crisp by “sensing” them have been developed by researchers.

Two new tools built by MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) offer a breakthrough in the emerging field of soft robotics – a new generation of robots that use squishy, flexible materials rather than traditional rigid equipment.

These types of soft robots often draw inspiration from living organisms and offer numerous benefits in their versatile functionality. They are able to operate far more delicately than their rigid counterparts, but until now they have lacked the ability to perceive what items they are interacting with.

To overcome this, the researchers equipped their robots with various sensors, cameras and software, allowing them to “see and classify” a range of objects.

“We wish to enable seeing the world by feeling the world,” said MIT professor and CSAIL director Daniela Rus.

“Soft robot hands have sensorized skins that allow them to pick up a range of objects, from delicate, such as potato chips, to heavy, such as milk bottles.”

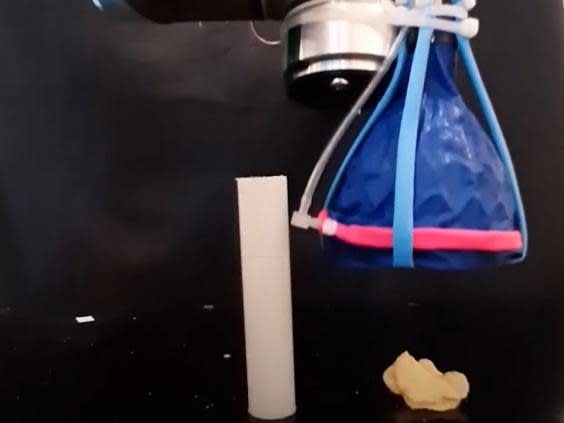

The first robot builds on research from MIT and Harvard University in 2019, in which a team developed a robotic gripper in the shape of a cone. It worked by collapsing in on an object in a similar way to a Venus flytrap, allowing it to pick up a range of awkwardly shaped objects up to 100 times its weight.

By adding tactile sensors, the robot was able to understand what it was picking up and adjust the amount of pressure exerted accordingly. The sensors identified the 10 objects used in the experiment with an accuracy rate of more than 90 per cent.

“Unlike many other soft tactile sensors, ours can be rapidly fabricated, retrofitted into grippers, and show sensitivity and reliability,” said MIT’s Josie Hughes, the lead author of a paper detailing the sensors.

“We hope they provide a new method of soft sensing that can be applied to a wide range of different applications in manufacturing settings, like packing and lifting.”

The second robot made use of an innovative “GelFlex” finger, which uses a tendon-driven mechanism and an array of sensors to provide “more nuanced, human-like senses”.

The team now hopes to fine-tune the sensing algorithms and introduce more complex finger configurations, such as twisting.

Both robots are detailed in a pair of papers, which will be presented virtually at the 2020 International Conference on Robotics and Automation.


Yahoo News
