Police hail ‘value’ of face scanning technology in legal action against its use

Facial recognition technology offers significant value to the public by identifying known individuals to the police, a court has heard.

Lawyers representing a police force leading the way with Automatic Facial Recognition (AFR) argued it does not interfere with human rights and is used in the same way as CCTV cameras.

Jeremy Johnson QC, representing South Wales Police, presented the force’s justification for the face scanning technology in the world’s first ever legal challenge against its use.

South Wales Police have used #FacialRecognition tech on the streets against the public on more than 50 occasions. Ed Bridges has been scanned and had his biometric data snatched twice. We're in court with Ed today to stop unlawful police use of the tech.#ResistFacialRec pic.twitter.com/24GM4Jm2lf

— Liberty (@libertyhq) May 21, 2019

Office worker Ed Bridges, 36, from Cardiff, crowdfunded the legal action, claiming that his face was scanned while he was Christmas shopping in 2017 and again at a peaceful anti-arms protest in 2018, and that this breached his human rights and the Data Protection Act.

But Mr Johnson said the force's use of the technology was justified: it deters people from committing crime, it is similar to police use of CCTV, and information about a person's face is not retained unless it matches the image of a known individual already on the police "watch list". The list comprises known offenders, people suspected of offending, and persons of interest.

Mr Johnson told the Administrative Court in Cardiff on Wednesday: “AFR is a further technology that potentially has great utility for the prevention of crime, the apprehension of offenders and the protection of the public.

“If AFR has a deterrent effect then it achieves its purpose.

“It offers significant value to the public and to public interest.

“The extractions of information of the images is nothing more than a person looking at two photographs and comparing them.

“So far as an individual is concerned, there is no difference in principle knowing you’re on CCTV and someone looking at it, or that is being done automatically in a millisecond.

“There is no continuing effect on the individual. It is deleted 24 hours later presuming there is no match. No human operator sees any of it.”

Mr Johnson said the two deployments of AFR where Mr Bridges claims his face was scanned were “justified” and resulted in police successfully identifying three people.

He said: “No personal information relating to the complainant was shared with any police officer. He was not spoken to by any police officer. The practical impact on him was limited.

“On each occasion AFR was being operated in an entirely transparent way and there has been no complaint apart from in these proceedings.

“Operation Fulcrum led to two arrests. The demonstration deployment identified a person who had made bomb threats at the very same event the previous year and subjected to a custodial sentence.

“It is of obvious value to policing to know that person is there so if another bomb threat is made you can deal with it accordingly.

“But it did not interfere with the complainant’s rights or anyone else there.”

Mr Johnson added the trial of AFR, which South Wales Police began in 2017, had not proved to be a “game-changer and a complete success”.

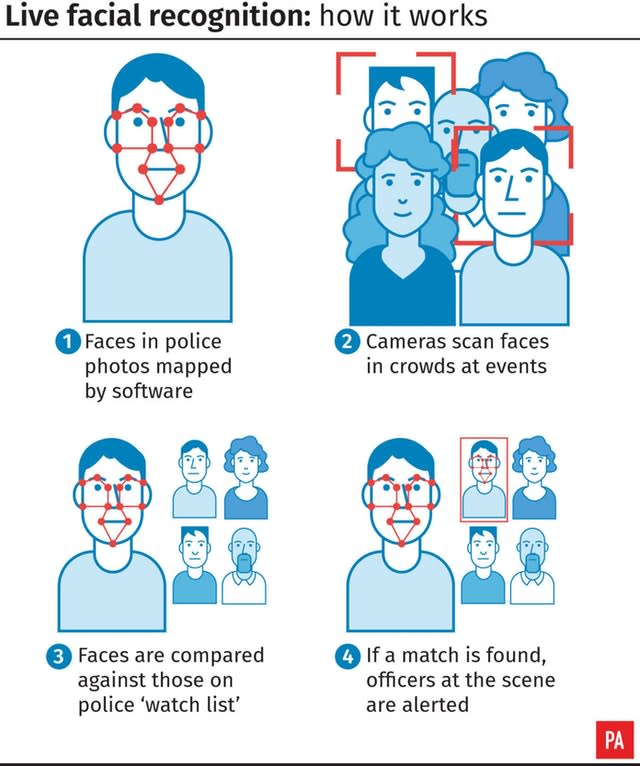

Facial recognition technology maps faces in a crowd by measuring the distances between facial features, then compares the results with a "watch list" of images, which can include suspects, missing people and persons of interest.
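The matching process described above, measuring distances between facial features and checking them against a watch list, can be illustrated with a minimal Python sketch. It is not how South Wales Police's system works: the landmark names, the normalisation by eye spacing and the 0.05 match threshold are all hypothetical choices made for illustration, and modern systems typically compare learned numerical representations of a face rather than a handful of hand-picked distances.

```python
import math
from itertools import combinations

# Hypothetical landmark set for illustration; real systems locate many more points.
LANDMARKS = ("left_eye", "right_eye", "nose_tip", "mouth_left", "mouth_right")


def distance_signature(points):
    """Pairwise distances between landmarks, divided by the eye spacing so the
    signature does not change with how large the face appears in the frame."""
    eye_gap = math.dist(points["left_eye"], points["right_eye"])
    return [math.dist(points[a], points[b]) / eye_gap
            for a, b in combinations(LANDMARKS, 2)]


def best_match(probe, watch_list, threshold=0.05):
    """Return the watch-list entry closest to the probe face, or None if no entry
    is within the (hypothetical) threshold."""
    probe_sig = distance_signature(probe)
    best_name, best_score = None, math.inf
    for name, points in watch_list.items():
        score = math.dist(probe_sig, distance_signature(points))
        if score < best_score:
            best_name, best_score = name, score
    return best_name if best_score <= threshold else None


# Made-up pixel coordinates for one watch-list entry and one unrelated passer-by.
WATCH_LIST = {
    "person_a": {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 60),
                 "mouth_left": (35, 80), "mouth_right": (65, 80)},
}
STRANGER = {"left_eye": (30, 40), "right_eye": (70, 40), "nose_tip": (50, 75),
            "mouth_left": (20, 95), "mouth_right": (80, 95)}

if __name__ == "__main__":
    # The same face seen twice as large (closer to the camera) still matches.
    closer = {k: (2 * x, 2 * y) for k, (x, y) in WATCH_LIST["person_a"].items()}
    print(best_match(closer, WATCH_LIST))    # person_a
    print(best_match(STRANGER, WATCH_LIST))  # None
```

Dividing by the eye spacing is one simple way to make the comparison independent of a face's size in the image; it does nothing about head angle or lighting, which is part of why production systems rely on trained models instead.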

Yahoo News