The human side of technology: how AI research is putting patients centre stage

Most people with eating disorders such as anorexia nervosa never seek help, says Dr Dawn Branley-Bell, a cyberpsychologist at Northumbria University. “But what is shocking is that eating disorders have the highest mortality rate of all mental health conditions.”

If more sufferers could be encouraged to seek support, might this change? It’s a question Branley-Bell hopes to answer through her research into how technology can improve health and wellbeing. Her work is part of a wider focus at Northumbria University on the potential of artificial intelligence (AI) to transform healthcare.

The university’s new Centre for Doctoral Training in Citizen-Centred Artificial Intelligence, which has received £9m in government funding through UK Research and Innovation, will enable doctoral students to specialise in areas such as AI for digital healthcare, robotics, decision making and sustainability. The centre brings together academics from across the university and will focus on the inclusion of citizens in the design and evaluation of AI.

Branley-Bell has been looking at whether AI could be used, in some cases, as a first port of call for people searching for medical information online.

AI-powered chatbots, for instance, could help individuals seek medical help sooner for conditions that they feel too embarrassed to see a doctor about, her research has found. While most people would generally rather speak face to face with a professional, in some instances chatbot technology, and the anonymity it offers, could prompt people to seek support who might not otherwise look for it. “You’ve got that first step through the door. A chatbot can reassure you that you’re not alone and direct you towards help,” says Branley-Bell, who adds that this technology may be useful for helping people who have an eating disorder.

AI chatbots are no replacement for doctors and medics, she says. They need to be constantly monitored and evaluated, and should never be used to cut costs. “But they have a role – for people who can’t get to a doctor’s during the working day or who have caring responsibilities, or who can’t travel.” And given the reality of lengthy waiting lists, chatbots can support people while they wait for treatment and services.

The new centre, one of 12 for doctoral training in artificial intelligence across the UK, will grapple with the ethical considerations of using the technology across a range of areas, including health and wellbeing.

The potential of AI in health scenarios is exciting but fraught with challenges, says Dr Kyle Montague, an expert in human-computer interaction at Northumbria University, who wants to see digital health tools co-designed by the people who will ultimately use them.

With the advent of ChatGPT and other tools, people now have easier access to once-elite technology. “By default, you get a more diverse segment of the population using the technology,” he says. This is both liberating and alarming – individuals risk unwittingly sharing sensitive health and personal information via unregulated apps and generative AI. “All of a sudden you’ve given really powerful tools to people who maybe don’t understand the nuts and bolts behind them.”

Ethical design and transparency are critical, researchers agree. “AI must be explainable,” says Branley-Bell. Typically, she says, complex algorithms have drawn results through opaque processes. Privacy and data security are also obviously key concerns.

The centre is taking an interdisciplinary approach, recruiting its initial tranche of 60 researchers across fields such as nursing, law and social services. “It’s so important to have people from all disciplines who can design AI products, systems and services that are fair, just and responsible,” says Montague. “We want people with lived experience and a good understanding of societal and health problems.”


Likewise, his research projects are careful to recruit participants from a wide social and ethnic pool. This is critical, he says: those who have the time and resources to take part in research tend to be white and middle class, and this data bias can skew results and entrench unfairness if used to train AI tools. With a wider spread of participants, he says, the AI tools emerging from the centre will be better designed, more responsible, and will better serve the people they are intended for.

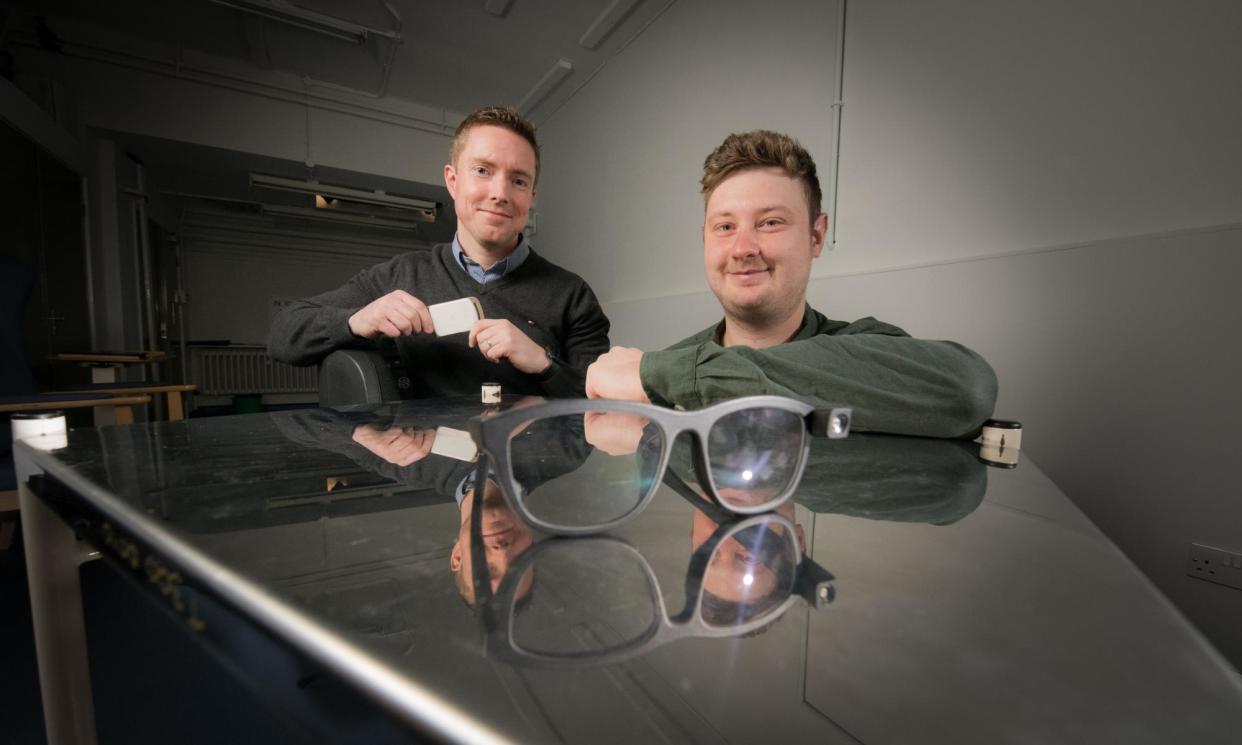

A good example of this is the work he co-led to develop a smartwatch-style device that vibrates to prompt people with Parkinson’s disease to swallow. As the condition affects automatic swallowing, about 80% of people with Parkinson’s find they drool, which can make them reluctant to socialise. Montague had asked support groups to dream up solutions to problems that would make their lives better – and they came up with the wearable device.

After lab tests, the technology – a watch connected to an app – is being tested in daily life by hundreds of people with Parkinson’s. This trial is just the beginning: more existing technologies can be personalised if individuals can explain their own health needs.

AI can also be used in preventive ways, by helping clinicians to get a better idea of what help vulnerable people need, says Dr Thomas Salisbury, an ophthalmology registrar at Sunderland Eye Infirmary. He is one of NHS England’s Topol digital fellows, a team of health and social care professionals leading digital health transformations and innovations in their organisations.

Working with Dr Alan Godfrey and Jason Moore from Northumbria University’s Digital Health and Wellbeing group, Salisbury is looking at how elderly people with diminished sight navigate their homes. Their work uses ethical AI tools to highlight risks by analysing footage from headcams and data from accelerometers worn by individuals to monitor movement.

In the recent past, this detailed analysis would only have been done in an artificial environment or would have had to be manually reviewed by researchers, but technology can now speed up this process. The study ultimately will help medics and therapists advise the elderly how to move more safely about their homes, with a view to preventing falls. “An AI algorithm can generate insights and spot patterns that people can’t necessarily find,” Salisbury explains.

AI offers enormous potential: it can detect diseases at an earlier stage and provide solutions that could reduce the burden on healthcare professionals. Striking a balance between those benefits and the challenges that come with them is at the forefront of thinking at Northumbria.


Yahoo News
