Which London universities ban ChatGPT and AI chatbots?

AI bots are no longer future tech. They are here.

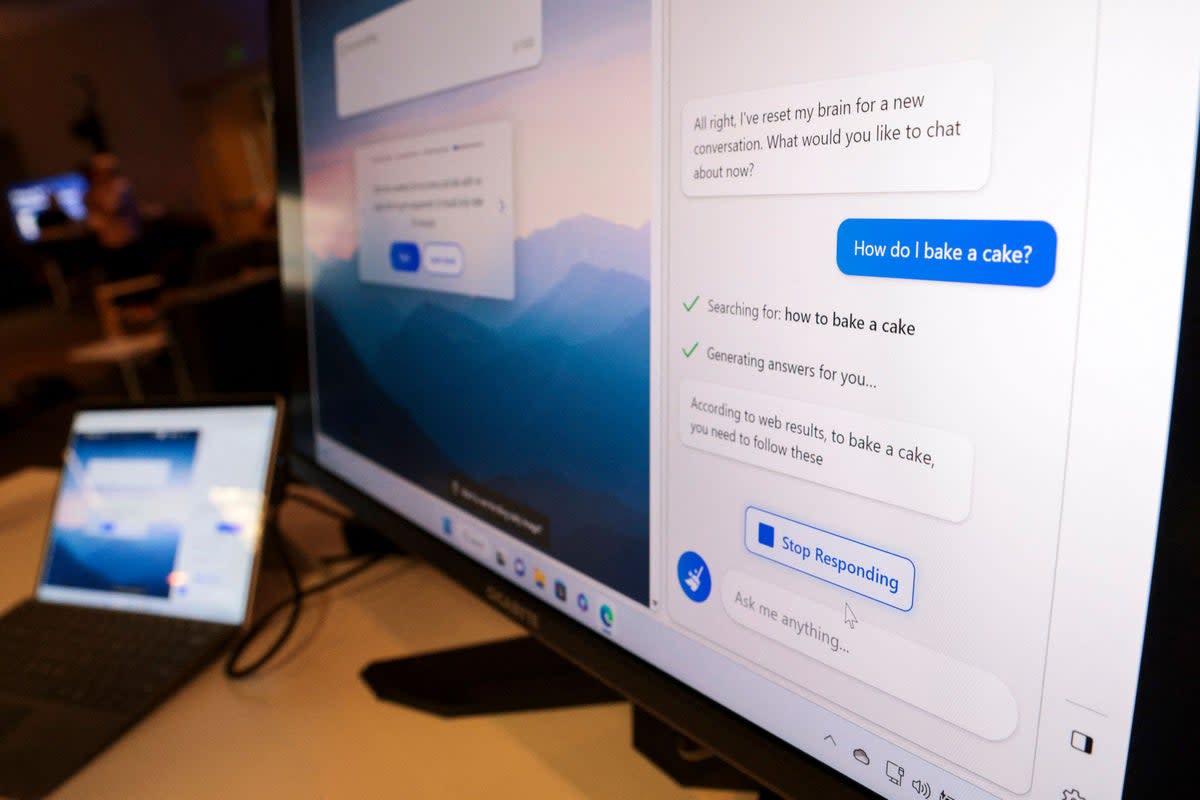

Microsoft’s Bing search engine has an AI-powered chatbot, and Google has Bard. A close relative of the Bing bot, ChatGPT, has been making headlines for months. Educational institutions are concerned students are using these chatbots to write essays for them because, well, they are.

However, an outright ban on AI tools is too regressive a step, according to some universities. As a student suggested in a recent ES Insider article, chatbots can be handy for collating research. This can reduce the busy work of a certain stage of essay writing, without removing the more intellectually challenging parts.

There are issues here too, though: in their current state, AI chatbots often make things up. It’s now trendy to equate chatbots with the autocomplete function of your phone’s keyboard. Still, these tools will only get stronger, and are not going anywhere.

But what do London universities say about the use of chatbots by students?

Imperial College London

Imperial College London is upfront in how it describes the use of AI-generated content.

“Submitting work and assessments created by someone or something else, as if it was your own, is plagiarism and is a form of cheating, and this includes AI-generated content,” reads the Imperial College website.

The university calls plagiarism a “major-level offence”, and suggests “the only penalty appropriate for major plagiarism in a research degree thesis is expulsion from College and exclusion from all future assessment.”

To use chatbots for submitted work at Imperial is to play with fire.

“To ensure quality assurance is maintained, your department may choose to invite a random selection of students to an ‘authenticity interview’ on their submitted assessments. This means asking students to attend an oral examination on their submitted work to ensure its authenticity, by asking them about the subject or how they approached their assignment.”

This viva-style method is a classic way of ensuring a student understands their own work, but Imperial College also uses digital tools to recognise copied work.

UCL

Taking a rather more positive approach than most London universities, UCL says it seeks to guide students’ use of AI tools, rather than banning them.

“We believe these tools are potentially transformative as well as disruptive, that they will feature in many academic and professional workplaces, and that rather than seek to prohibit your use of them, we will support you in using them effectively, ethically, and transparently,” its website reads.

However, it all gets a bit hazy when it comes to what that means in real terms.

“You also need to be aware of the difference between reasonable use of such tools, and at what point their use might be regarded as giving you an unfair advantage.”

What counts as an “unfair advantage” when we are dealing with often freely available tools?

“Using AI tools to help with such things as idea generation or your planning may be an appropriate use, though your context and the nature of the assessment must be considered. It is not acceptable to use these tools to write your entire essay from start to finish.

“Also, please bear in mind that words and ideas generated by some AI tools make use of other, human authors’ ideas without referencing them, which, as things stand, is controversial in itself and considered by many to be a form of plagiarism,” says UCL.

Despite UCL’s more moderate approach, students should tread carefully, as improper use of chatbots will be considered a form of plagiarism.

LSE

The London School of Economics does not have any specific AI chatbot guidance on its website that is accessible to the public. However, it has provided us with a statement on its position on the use of these tools.

“LSE acknowledges the potential threat that generative Artificial Intelligence Tools (AI) may pose to academic integrity, and takes a pro-active and methodical approach to the prevention and detection of assessment misconduct. We take a firm line with any students that are found guilty of committing assessment misconduct offences,” says the LSE spokesperson.

“The School has released guidance for course convenors in the current academic year and is convening a cross-School working group with members of academic and professional staff and student partners to explore possible impacts and how we might embrace the potential of these tools in teaching, learning and assessment in the future.”

This suggests that, as in most other universities, students who submit assessed work generated by a chatbot will be in big trouble.

Queen Mary University of London

While Queen Mary University of London does not explicitly reference AI tools in its code of conduct, getting a chatbot to write essays or other submitted work will fall under the more general wording seen here:

“The use of ghost writing (e.g. essay mills, code writers etc.) and generally using someone external to the institution to produce assessments is an assessment offence,” reads the student handbook.

Like Oxford University, Queen Mary also makes use of the plagiarism-detection platform Turnitin:

“Be aware that technology, such as Turnitin, is now available at Queen Mary and elsewhere that can automatically detect plagiarism.”

While Turnitin isn’t yet ready to detect AI-generated passages, it soon will be. The company hopes to start integrating this form of monitoring into its software from April, and claims a 97 per cent success rate when identifying ChatGPT content. Microsoft’s Bing chatbot is derived from the same technology, made by OpenAI.

There is a one per cent false-positive rate, though, which could make for some interesting appeals claims.

King’s College London

King’s College does not offer specific guidance on its approach to AI bots, and it did not reply to our request for comment. However, as seen elsewhere, the wording of the university’s plagiarism policy covers the unethical use of chatbots in academic work. Here’s the crucial paragraph:

“The College’s definition of plagiarism does not include intention because this is difficult to identify. This means if you submit a piece of work which, for example, contains ideas from other sources which you have not referenced, or includes the exact words of others without putting them in quotation marks, it would still be considered as plagiarism regardless of whether you intended to do so,” reads the University’s student guidance document.

“It is important therefore that you fully understand plagiarism and how to reference correctly to ensure it doesn’t happen by accident.”

King’s College also uses the anti-plagiarism platform Turnitin as part of its KEATS (King’s E-Learning and Teaching Service) platform, so will soon be able to track down AI-derived work, according to Turnitin.

Yahoo News
