Computer modeling and tiny meteorites suggest that CO2 blanketed ancient Earth

Today, rising carbon dioxide in the atmosphere is a cause for concern, but 2.7 billion years ago, high levels of CO2 probably kept our planet warm enough for life even though the sun was about 20% fainter than it is today.

A newly published study, based on analyses of ancient micrometeorites and a fresh round of computer modeling, estimates just how high those CO2 levels were. The likeliest level is somewhere in excess of 70% CO2, scientists from the University of Washington report today in the open-access journal Science Advances.

Based on the modeling, global mean temperatures would have been in the mid-80s Fahrenheit (roughly 30 degrees Celsius).

All that is good news for astrobiologists, because such an environment matches up well with the picture that scientists have of Earth during what’s known as the Archean Eon. The high CO2 levels wouldn’t be livable for us humans, but they’d be fine for the early organisms that ruled the Earth before oxygen levels rose.

The findings “could also inform our understanding of Earth-like exoplanets and their potential habitability,” said the study team, led by UW researcher Owen Lehmer.

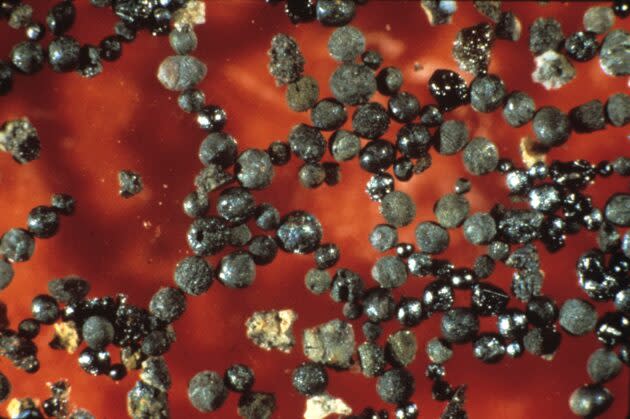

Lehmer and his colleagues started out with a chemical analysis of tiny beads of metal that were found in 2.7 billion-year-old limestone from northwestern Australia. Those iron-rich bits came to Earth as micrometeorites from space. As they fell through the atmosphere, the bits heated up to become droplets of molten metal, and then congealed into beads that are about the size of grains of sand.

While the micrometeorites were in their molten state, they reacted chemically with gases in the atmosphere. Some of the iron turned into oxidized minerals such as wüstite and magnetite. By analyzing the extent of the oxidation, and making some assumptions about atmospheric composition, scientists can estimate how much of which gases were present at the time.

An earlier study by a different team of researchers assumed that the micrometeorites reacted primarily with oxygen gas, but that led to conclusions that were out of sync with other evidence about early Earth’s environment and atmospheric mixing. The UW scientists went with a different approach, assuming that carbon dioxide was the primary oxidant.

When the research team ran the numbers, they found that a wide range of CO2 concentrations could explain the oxidation levels seen in the micrometeorites — as little as 6%, or as much as 100%. But because some of the metal bits were fully oxidized, levels in excess of 70% provided the best fit for the data.

“Our results are more consistent with what other measurements and models of the Archean Earth predict, that atmospheric CO2 was likely abundant,” Lehmer told GeekWire in an email.

Obviously, we’re not seeing levels that high today (thank goodness). Over the course of our planet’s history, CO2 concentrations have decreased to a few hundred parts per million (0.04% by volume and currently rising).
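For a rough sense of scale, the two figures quoted in this story can be put side by side. The sketch below is plain arithmetic on those numbers, not anything from the study itself: the constant and function names are made up for illustration, the 70% value is treated as a lower bound on the best-fit range, and the comparison covers share of the atmosphere by volume only, which says nothing about total atmospheric pressure in the Archean.

```python
# Figures quoted in the article, both as fractions of the atmosphere by volume.
# The names below are illustrative assumptions, not taken from the paper.
ARCHEAN_CO2_FRACTION = 0.70    # "in excess of 70%", used here as a lower bound
MODERN_CO2_FRACTION = 0.0004   # 0.04% by volume


def percent_to_ppm(percent: float) -> float:
    """Convert a percentage by volume to parts per million (1% = 10,000 ppm)."""
    return percent * 10_000


def fold_difference(ancient_fraction: float, modern_fraction: float) -> float:
    """Return how many times larger the ancient volume fraction is."""
    return ancient_fraction / modern_fraction


if __name__ == "__main__":
    modern_ppm = percent_to_ppm(MODERN_CO2_FRACTION * 100)
    fold = fold_difference(ARCHEAN_CO2_FRACTION, MODERN_CO2_FRACTION)
    print(f"Modern CO2: about {modern_ppm:.0f} ppm")
    print(f"Archean share is at least about {round(fold)} times today's")
```

On these numbers, today's 0.04% works out to about 400 parts per million, and a 70% CO2 atmosphere would carry a share of CO2 roughly 1,750 times larger than the present one.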

The UW researchers said the levels of unoxidized iron should have risen in ancient times as CO2 levels fell, at least up to the time when oxygen levels became significant — in effect, providing a method to measure the evolution of Earth’s atmosphere. “To verify this hypothesis, additional micrometeorites should be collected and analyzed,” they wrote.

Lehmer said the team’s findings could have implications for the study of exoplanetary atmospheres in the years ahead.

“When looking for habitable exoplanets, it is definitely important to consider CO2-rich planets as possible targets,” he told GeekWire. “Such planets may be similar to early Earth. Unfortunately, CO2 can also exist on uninhabitable planets without life (both Mars and Venus have dominantly CO2 atmospheres) so any exoplanet detections will need context to interpret measurements of atmospheric CO2.”

In addition to Lehmer, the authors of the paper appearing in Science Advances, “Atmospheric CO2 Levels From 2.7 Billion Years Ago Inferred From Micrometeorite Oxidation,” include David Catling, Roger Buick, Don Brownlee and Sarah Newport.


Yahoo News
