Here are all the steps social media took to combat misinformation. Will it be enough?

Four years ago, foreign actors leveraged social media to interfere in the US presidential election. This year, too, misinformation is among the greatest threats to American democracy, experts warn.

With conspiracy theories such as QAnon flourishing, a president who regularly uses social media platforms to demonize his opponents or spread falsehoods about the election process, and a federal government that has done little to combat foreign election interference online, tech platforms’ responsibility in the 2020 election process has only grown.

Reeling from criticism that they failed in past years to act decisively to curb those threats, major tech platforms such as Facebook and Twitter have announced broad steps to combat misinformation ahead of the 3 November vote.


The measures range from changing algorithmic recommendations to limiting users’ abilities to share falsehoods. But some experts are doubtful the changes, enacted as hundreds of thousands of Americans have already cast votes, are sufficient. Others have criticized the lack of transparency into how these changes are applied.

“I am concerned right now the future of our democracy is in the hands of very few people at these tech companies,” said Lisa Fazio, a professor at Vanderbilt University who studies the spread of misinformation.

As mail-in ballots pour in from around the United States, here are notable changes in policy and practices from the biggest social media platforms affecting discourse around the elections.

Facebook and Instagram: ‘too little too late’

Facebook has instituted a slew of new policies around the electoral process and misleading claims by politicians. The measures also apply to Instagram, which is owned by Facebook.

The company placed an outright ban on content that seeks to intimidate voters or interfere with voting, after Trump encouraged his supporters to “watch” people at the polls in a manner some said was threatening.

Since 10 October, it has been featuring information panels and videos about voting at the top of the news feed, offering state-specific tips on how to vote by mail in both English and Spanish.

It launched a “voter information center” that includes posts from verified election authorities on news related to the voting process, guidance on voter registration, and other information on planning when and where to vote. Facebook said it has helped an estimated 2.5 million people register to vote this year through this center.

It said it would stop accepting new political advertising one week before election day and would stop running political ads indefinitely starting 3 November, the day of the election, “to reduce opportunities for confusion or abuse”.

It will label posts from candidates that claim victory prematurely. If the victory of a candidate who is declared the winner by major media outlets is contested by another candidate or party, the platform will show the name of the declared winner in a banner at the top of its pages.

It plans to label posts from presidential candidates with the declared winner’s name and a link to the Voting Information Center.

And it began to label false or misleading posts from public figures, including Trump. In September, Facebook for the first time added a label underneath a post from Trump casting doubt on mail-in voting and encouraging supporters to vote twice, saying that mail-in voting has been historically trustworthy.

Most of the changes, however, are “too little, too late”, said Jim Steyer, founder and chief executive officer of children’s online safety nonprofit Common Sense Media. Steyer’s organization was one of several behind the Stop Hate for Profit campaign, which in July persuaded more than 1,000 companies to stop advertising on Facebook due to its content management policies.

“They have allowed the amplification of hate, racism, and misinformation at a scale unprecedented in my lifetime,” he said.

Steyer did concede the addition of voter information centers on Facebook was a positive step toward ensuring a fair and free election. “I do commend them for making accurate voter information available,” Steyer said. “But they should still stop the disinformation more proactively.”

Twitter: good decisions that take too long

Like Facebook, Twitter has instituted measures to combat misinformation and appears to be planning for the event that election results are delayed or contested.

Twitter has created an elections “hub” at the top of the explore page for US users. It will feature news in English and Spanish from reputable sources as well as live streams of major election events.

The platform in October ruled that users may not claim an election win before it is called and cannot tweet claims or orders meant to interfere with election processes.

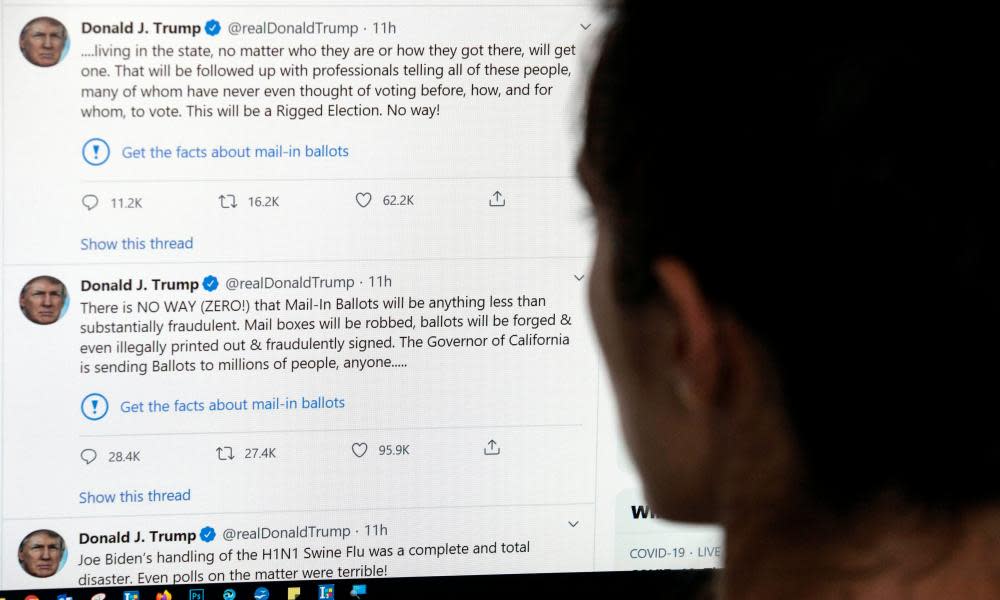

It said it would also remove “false or misleading information intended to undermine public confidence in an election or other civic process”, “disputed claims” that could undermine faith in the process, and “misleading claims” about the outcome of an election. For example, Twitter in September put a “public interest” notice on a Trump tweet that shared misinformation about voting. As part of the policy, Twitter doesn’t allow retweets of such posts. Users may quote-tweet some of them to add context.

The platform has also introduced a new feature that prevents users from retweeting articles without having read them.

Twitter’s measures to limit the shares of misleading election information are likely to have a positive effect on election integrity, said Fazio, the Vanderbilt professor. “It is useful to stop the spread of misinformation and falsehoods, no matter who that falsehood comes from,” she said.

She cautioned it may be difficult to determine which posts are egregious enough to get a warning label and wondered whether the company’s policy to block readers from retweeting articles without having read them is helpful or “just annoying to readers”. Twitter said that when it tested the feature on a limited number of users during the summer, 33% more people opened the link than did without the warning.

“I’ve been pleased so far with Twitter’s decisions,” Fazio concluded. “They are just often taking too long.”

YouTube: ‘rabbit holes with more extremism’

YouTube has few new policies relating to the 2020 elections, despite its reputation as a hotbed for misinformation and conspiracy theories. The video sharing platform highlighted in a recent blogpost how its existing policies would apply to election-related content, and has been surfacing “information panels” with context on election-related search results on the platform.

YouTube pledges to remove “content that has been technically manipulated or doctored in a way that misleads users (beyond clips taken out of context) and may pose a serious risk of egregious harm”. The most high-profile example of this was when it removed a video of House Speaker Nancy Pelosi that was manipulated to make her appear intoxicated.

The platform also bans content that contains hacked information that may “interfere with democratic processes, such as elections and censuses”.

It also said it will remove content “encouraging others to interfere with democratic processes”, citing an example such as telling viewers to create long voting lines with the purpose of making it harder for others to vote.

Like Facebook and Twitter, YouTube has created a voting information panel to appear on searches related to 2020 presidential or federal congressional candidates. This will include content such as how to register to vote and links to official state websites for more information.

Fazio said YouTube could benefit from changes like those made to Twitter to limit algorithmic recommendations that may expose users to misinformation or radicalized content. “I can see how people think it would be helpful to show related content, but in many cases it is sending users down rabbit holes with more extremism,” she said.

Meanwhile, YouTube on election day will be plastered with ads from Trump after his campaign secured the highly trafficked ad space with the platform earlier this year.

TikTok: strengthening past efforts

TikTok’s existing disinformation policy prohibits “content that misleads community members about elections or other civic processes”. The company announced measures to “strengthen these efforts” in a blogpost in August.

The measures include an update on its policies to clarify what is allowed on TikTok.

The company, which is Chinese-owned, is also working with the Department of Homeland Security to protect against foreign influences on TikTok.

The platform already partners with the World Health Organization to create pop-ups citing credible information on coronavirus-related content to prevent the spread of misinformation. It has expanded its partnerships to “help verify election-related misinformation” and now works with third-party factchecking organizations, including the Poynter Institute and its MediaWise program, Science Feedback, Lead Stories, and Vishvas News, to do so.

Like other platforms, it has launched an in-app guide to the 2020 elections, telling users how to vote and connecting them with additional information from trusted sources such as BallotReady and the National Association of Secretaries of State.

Yahoo News
